Authors:

(1) Hung Le, Applied AI Institute, Deakin University, Geelong, Australia;

(2) Dung Nguyen, Applied AI Institute, Deakin University, Geelong, Australia;

(3) Kien Do, Applied AI Institute, Deakin University, Geelong, Australia;

(4) Svetha Venkatesh, Applied AI Institute, Deakin University, Geelong, Australia;

(5) Truyen Tran, Applied AI Institute, Deakin University, Geelong, Australia.


A. More Discussion on Related Works

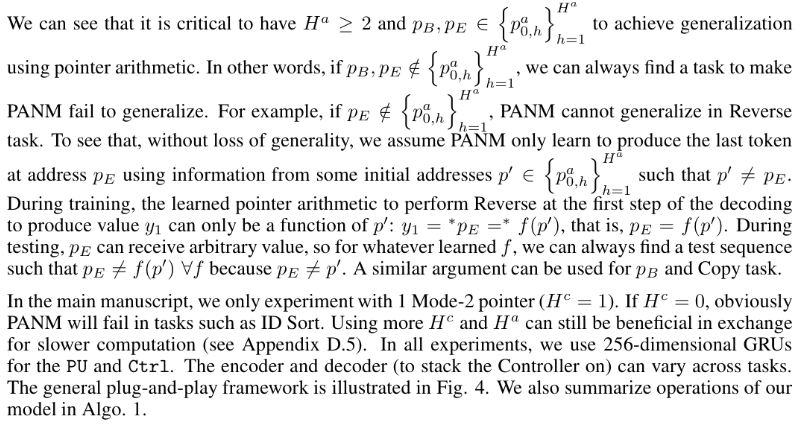

The address attention proposed in our paper is comparable to two known mechanisms: (1) location-based attention [Luong et al., 2015, Dubois et al., 2020] and (2) memory shifting [Graves et al., 2014, Yang, 2016]. The former uses neural networks to produce attention weights over the memory/sequence, which cannot help when the memory grows during inference, since the networks never learn to generate weights for the additional slots. Inspired by the Turing Machine, the latter shifts the current attention weight associated with a memory slot to the next or previous slot. Shifting-like operations can handle any sequence length; however, they cannot simulate complicated manipulation rules. Moreover, unlike our PU design, which obeys § 1’s principle II, the attention weight and the network trained to shift it depend on the memory content M. This is detrimental to generalization, since new content can disturb both the attention weight and the shifting network as the memory grows.
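As a rough illustration of the memory-shifting idea discussed above, the sketch below moves an attention distribution to a neighbouring slot by circular convolution, in the spirit of Neural Turing Machine addressing. The function name `shift_attention` and the hand-set three-way shift distribution are assumptions made for this example; this is not code from any of the cited papers.

```python
import numpy as np

def shift_attention(w, s):
    """Circularly shift attention weights `w` over memory slots.

    `s` is a distribution over the offsets (-1, 0, +1), i.e.
    (move to previous slot, stay, move to next slot).
    """
    w = np.asarray(w, dtype=float)
    out = np.zeros_like(w)
    for offset, prob in zip((-1, 0, 1), s):
        # np.roll(w, 1) moves the weight at slot i to slot i + 1 (wrapping around).
        out += prob * np.roll(w, offset)
    return out

# Attention fully on slot 2 of a 5-slot memory, shifted to the next slot.
w = np.zeros(5)
w[2] = 1.0
moved = shift_attention(w, (0.0, 0.0, 1.0))

# The same operation applies unchanged to a longer memory.
w_long = np.zeros(12)
w_long[10] = 1.0
moved_long = shift_attention(w_long, (0.0, 0.0, 1.0))
```

The shift itself is length-agnostic, which is why it handles any sequence length. In this sketch the shift distribution is fixed by hand; in the shifting models discussed above it is emitted by a network that depends on memory content, which is the dependence the paragraph identifies as harmful to generalization.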

Another line of work tackles systematic generalization through meta-learning [Lake, 2019], whereas our method employs standard supervised training. The two approaches are complementary: our method concentrates on improving the model architecture rather than the training procedure, making it applicable in diverse settings beyond SCAN tasks. Additionally, Hu et al. [2020] address syntactic generalization, a different problem from ours, which emphasizes length extrapolation across various benchmarks. Notably, our paper considers baselines similar to those examined in the referenced papers, such as LSTM and Transformer. Other lines of research target reasoning and generalization with image input [Wu et al., 2020, Eisermann et al., 2021]. They are outside the scope of our paper, which specifically addresses generalization to longer sequences of text or discrete inputs.

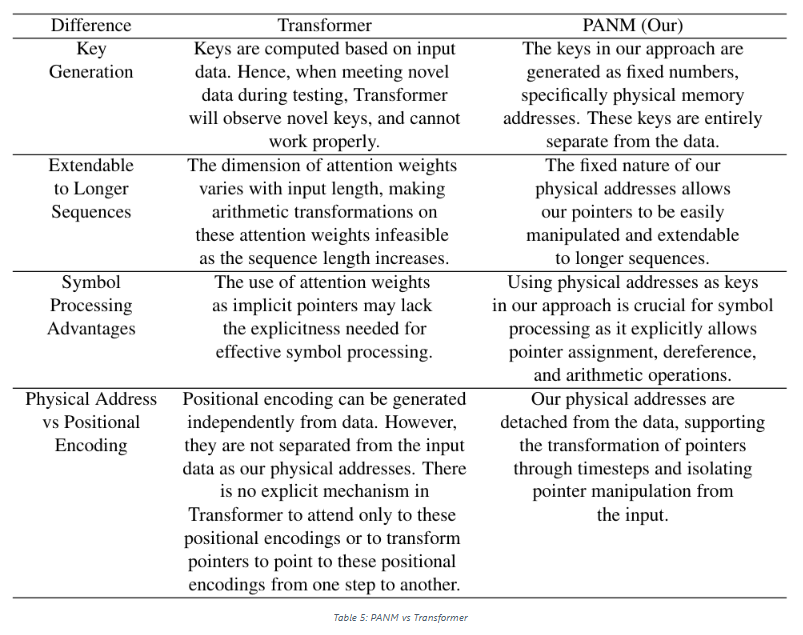

Our address bank and physical pointers can be viewed as a form of positional encoding. However, we do not use simple projections or embeddings to force the attention to be position-only. Instead, we aim to learn a series of transformations that simulate position-based symbolic rules. At each time step, a new pointer ("position") is dynamically generated that reflects the manipulation rule required by the task (e.g., move to the next location). This is unlike positional encoding approaches such as RPE [Dai et al., 2019], which aim to provide the model with information on the relative position or distance of the timesteps. We summarise the differences between our method and Transformer in Table 5.

B. More Discussion on Base Address Sampling Mechanism

We provide a simple example to illustrate how base address sampling helps generalization. Assume the training sequence length is 10 and the desired manipulation is p′ = p + 1 (copy task). Assume the possible address range is 0, 1, ..., 19, which is bigger than any sequence length. If p_B = 0, the training address bank contains the addresses 0, 1, ..., 8, 9. Without base address sampling, the model always sees the training address bank 0, 1, ..., 8, 9 and thus can only learn the manipulation function for 0 ≤ p ≤ 9; it therefore fails when the test address bank includes addresses larger than 9.
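The effect described in this example can be sketched in a few lines. The code below compares which addresses are ever seen during training with a fixed base address of 0 versus a randomly sampled one. The address range (0 to 19) and training length (10) come from the example; the uniform sampling, the wrap-around of addresses modulo the range, and all function names are assumptions made for illustration only.

```python
import random

ADDRESS_SPACE = 20  # addresses 0..19, as in the example
TRAIN_LEN = 10      # training sequence length, as in the example

def address_bank(base, length, space=ADDRESS_SPACE):
    # Assumption: consecutive addresses wrap around modulo the address space.
    return [(base + i) % space for i in range(length)]

def addresses_seen(num_episodes, sample_base, seed=0):
    """Collect every address that appears in any training address bank."""
    rng = random.Random(seed)
    seen = set()
    for _ in range(num_episodes):
        # Assumption: the base address is drawn uniformly when sampling is on.
        base = rng.randrange(ADDRESS_SPACE) if sample_base else 0
        seen.update(address_bank(base, TRAIN_LEN))
    return seen

fixed = addresses_seen(100, sample_base=False)   # always the bank 0..9
sampled = addresses_seen(100, sample_base=True)  # banks start at varying bases

print(sorted(fixed))
print(sorted(sampled))
```

With a fixed base, only addresses 0 to 9 ever appear, so a rule such as p′ = p + 1 is never exercised on larger addresses. With a sampled base, banks starting at other addresses expose the model to the same rule on addresses beyond 9.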

C. More Discussion on Model and Architecture

This paper is available on arXiv under the CC BY 4.0 DEED license.